By Colin Barnden

Levels of driving automation, as defined in SAE Standard J3016, are too widely misused and abused to be informative. Let’s look briefly at the confusion created by the standard and consider an alternative.

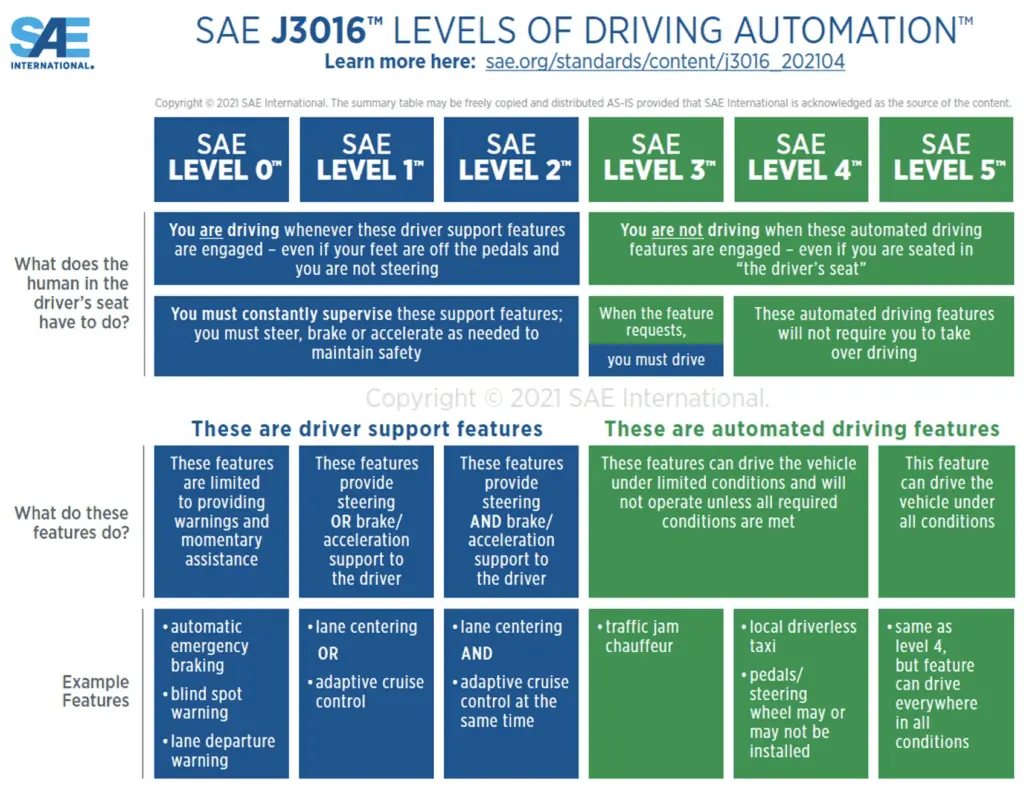

The well-known J3016 infographic is shown above. The problem with it is quite simple: By assigning numbers to levels, the standard creates the impression of a roadmap.

Actually, there are two separate roadmaps:

- Levels 0 to 2: Increased driving automation.

- Levels 3 to 5: Increased competence of the machine driver, coupled with decreased reliance on humans to supervise the driving task.

The trouble with a roadmap is that it implies there’s a destination — in this case, Level 5. Intentionally or not, J3016 created a “race to Level 5,” which has dominated both the technology and automotive industries for years.

In the beginning, no one really knew what achieving Level 5 meant. But tech startups, drunk on billions of dollars of venture capital funding and preaching a mission to “save lives,” knew the winner would be the one to get there first.

Braggadocio à outrance became the order of the day. Level 4? Forget it. Level 3? Skipped. Level 2? Passé. Level 1? Easy. Level 0? Extinct.

The outcome was an epic misallocation of capital and resources. Vital and achievable life-saving technologies that could make human drivers safer, such as driver monitoring systems (DMS) and advanced automatic emergency braking, were starved of funding. But anything “self-driving,” particularly machine learning, LiDAR and “full autonomy,” was flooded with cash.

It fell to Phil Koopman, associate professor at Carnegie Mellon University, to point out that J3016 is merely a terminology standards document. Indeed, SAE International originally described J3016 simply as a “Taxonomy and Definitions for Terms Related to On-Road Motor Vehicle Automated Driving Systems.”

Hence, J3016 is not fit for purpose and must be withdrawn.

Fortunately, Koopman has not only called out the limitations of the standard but has also proposed an alternative, switching out the six levels of driving automation for four vehicle operational modes: driver assistance, supervised automation, autonomous operation, and vehicle testing.

The modes are more tightly defined than the levels, are much harder to manipulate, and don’t imply a continuum. Let’s look at each in turn.

Driver assistance

In this mode, as Koopman describes it, “the driver always remains in the steering loop, exerting at least some form of sustained control over lane keeping and turns to ensure active engagement and situational awareness.” Driver liability is the same as for conventional vehicles.

I define driver assistance as technology that provides audible or visual driver warnings, or short-duration (typically 1 to 3 seconds) longitudinal speed assist and/or lateral lane support.

Typical technologies include automatic emergency braking, forward-collision warning, lane-keep assist and lane departure warning.

Supervised automation

Here, according to Koopman’s definition, “the vehicle controls speed and lane keeping. A human driver handles things the system is not designed to address.”

I define supervised automation as a system that provides automated longitudinal and lateral vehicle control, while the human driver fulfills the role of automation supervisor and thus is liable for the driving task at all times. Direct, robust DMS must be in place to keep the driver attentive and engaged in the supervisory role.

The operational design domain is limited to divided highways, and these systems are not operated in proximity to vulnerable road users. Examples include GM Super Cruise, Ford BlueCruise, and Mercedes-Benz Drive Pilot.

Autonomous operation

Here’s how Koopman describes this mode: “The whole vehicle is completely capable of operation with no human monitoring.” Under this definition, neither systems that rely on a machine-to-human handover nor systems covered by UN Regulation 157 for Automated Lane Keeping Systems meet the condition for autonomous operation; therefore, such systems must be included under supervised automation.

Truly autonomous operation dictates that “if something goes wrong, the vehicle is entirely responsible for alerting humans that it needs assistance and for operating safely until that assistance is available,” Koopman states. His description of autonomous operation goes further, to include operation under remote assistance, or tele-operation:

A vehicle that received remote assistance would still be exhibiting Autonomous Operation if (a) the vehicle requests assistance whenever needed without any person being responsible for noticing there is a problem, and (b) the vehicle retains responsibility for safety even with assistance. In some cases, autonomous operation might change mode to remotely supervised operation if a remote operator becomes responsible for safety.

It is plausible that a fifth vehicle operational mode could be introduced to distinguish between an autonomous vehicle designed to fall back on remote (human) supervised operation and an autonomous vehicle that is capable of, and liable for, resolving all driving scenarios without any human assistance.

Vehicle testing

Here, “a trained safety driver supervises the operation of an automation testing platform,” according to Koopman. “The organization performing testing is responsible for safety in accordance with a Safety Management System that includes driver qualification, driver training, and testing protocols.”

No trend to autonomous operation

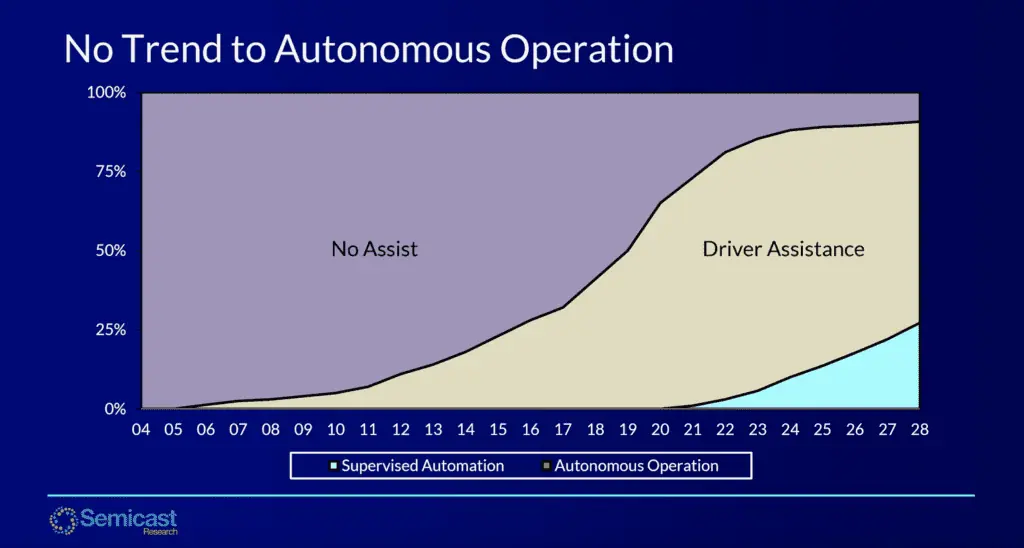

Let’s map these vehicle operational modes onto global light-vehicle production volumes, specifically for privately owned passenger vehicles, as shown in the chart covering the 2004–2028 period. (Note: the analysis intentionally excludes robotaxis, low-speed autonomous passenger shuttles, autonomous trucks, etc.)

The conclusion is obvious: There is no trend to autonomous operation in privately owned passenger vehicles, at least throughout this decade. Driver assistance, yes. Supervised automation (shown on the chart in blue), yes. But autonomous operation in consumer vehicles? No.

Thus, for the foreseeable future, humans will be required to fulfill the task of automation supervisor. This means automakers will be required to install robust DMS to keep humans engaged in the supervisory role, thereby preventing automation complacency. According to a recent report from Consumer Reports, that is precisely what drivers want.

So, I’d advise automakers seeking to demonstrate their automation competency to focus their R&D efforts on supervised automation systems mated with robust driver monitoring. Think GM Super Cruise and Ford BlueCruise, rather than Tesla’s Full Self-Driving.

Regulatory and advisory bodies that seek to enhance road safety are advised to focus legislation on driver monitoring and driver assistance technology, to make human drivers safer by mitigating distraction, drowsiness and impairment.

And investors that backed self-driving companies and LiDAR suppliers to the tune of billions are advised to revisit the meaning of the term caveat emptor.

Colin Barnden is principal analyst at Semicast Research. He can be reached at colin.barnden@semicast.net.

Copyright permission/reprint service of a full Ojo-Yoshida Report story is available for promotional use on your website, marketing materials and social media promotions. Please send us an email at talktous@ojoyoshidareport.com for details.