By Colin Barnden

What’s at stake:

Argo AI was shut down in October 2022 and Cruise has paused all driverless operations. Is the future of AV technology a broad highway, or a cul-de-sac?

What is the point of AVs? Really, if AVs are the solution, then what is the problem? We know the answer to that question because the AV developers keep telling us. AVs will save lives and provide accessible and affordable transportation to disabled and disadvantaged citizens.

So, investors in Cruise, Waymo, and other AV developers have collectively dropped tens of billions of dollars to fund what might be called Autonomous Altruism?

Seriously? No. That was just the PR spin for the benefit of the lawmakers and regulatory agencies to get AVs onto public roads in the first place. The true goal for AVs is revealed simply by listening to any recent GM investor conference and hearing CEO Mary Barra talk about the business opportunities.

The raison d’etre of AV developers is to make money for their investors. This is the defining characteristic of any business, and it is time to call BS on the “saving lives” and “accessible transportation” narratives.

AVs are safer than humans?

AVs operated on public roads pose a safety risk to the general public, but no U.S. city or state has held a democratic vote to ask its residents if that is a risk they agree to take. That decision has been made on their behalf, and obviously under the influence of lawyers and lobbyists acting for the AV developers.

There are very serious ethical questions to be answered here, which no one is talking about. That is because there is no opportunity for a rational debate to take place about how best to move forward. The AV developers continue to push a message of autonomous altruism, enabled by a crowd of commentators that might best be called “Autonomous Apologists.”

The Apologists also dismiss the short-term risks posed by operating AVs on public roads by focusing on the long-term societal benefits. A common assertion is that AVs are inevitable, and that AV deployment is a question of when, not if.

It is now stated as an axiom that AVs are safer than human drivers, even in the absence of any impartial third-party data to confirm it. What could be the source of that conclusion?

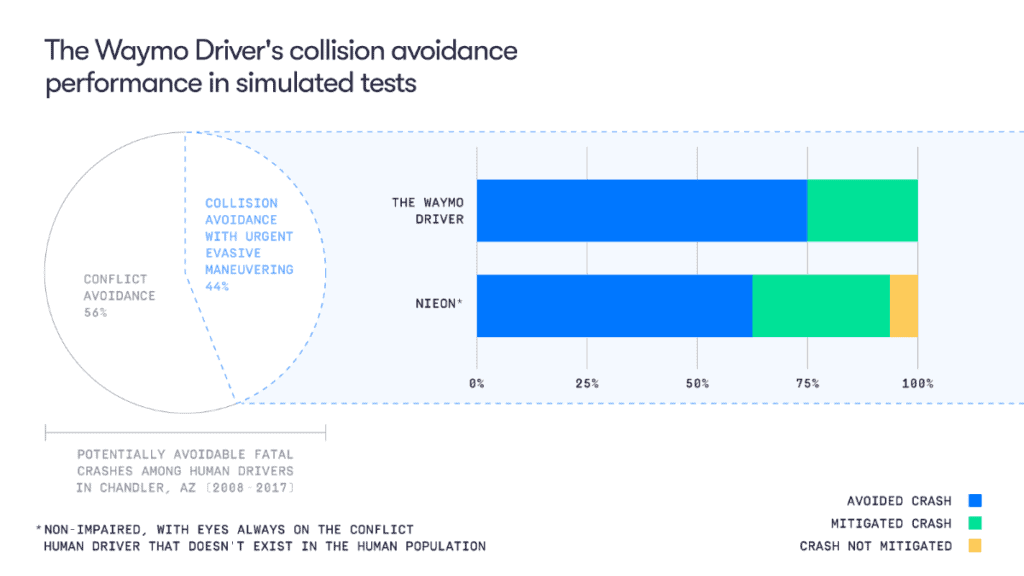

Last year Waymo published a blog post on safety in which it described a reference model that represents an ideal human state for driving. Called “NIEON,” it models the response time and evasive action of a human driver who is non-impaired, “with eyes always on the conflict.”

Unsurprisingly, Waymo concluded that:

“The Waymo Driver outperformed the NIEON human driver model by avoiding more collisions and mitigating serious injury risk in simulated fatal crash scenarios.”

Ergo, AVs are safer drivers than humans, and public roads are safer with AVs than without. That narrative was successfully gaining ground right up until a Cruise robotaxi dragged a pedestrian 20 feet along a San Francisco street.

The underlying problem with AV safety is described by Missy Cummings, a professor at George Mason University, who is considered an international expert and leader in the field of autonomous systems. Cummings frequently observes that AVs cannot reason under uncertainty in the ambiguous and unexpected scenarios that happen all the time on public roads.

In a research paper entitled “Assessing Readiness of Self-Driving Vehicles,” Cummings observes: “Cruise’s and Waymo’s robotaxis in San Francisco are 4-8x more likely to be involved in non-fatal crashes.”

Because they operate at relatively low speeds on city streets and are packed with lidar sensors and compute, robotaxis can avoid most fatal crash scenarios by jamming the brakes on faster and harder than a human driver can.

But robotaxis simply do not yet understand the subtlety and nuance of human behavior. Cummings’ warnings of non-fatal crashes, while prescient, were ignored in favor of the unverified narrative, supplied by the AV developers themselves, that AVs are safer than humans.

Are AVs safer than humans? Cummings says not, and as of this writing Cruise has suspended all driverless operations in the U.S. and is barred from operating driverless rides in the state of California.

Making human drivers safer

An alternative to replacing human drivers with AVs is simply to make human drivers safer, which is the approach being followed in Europe with its updated General Safety Regulation.

There are many ways U.S. regulators could make human drivers safer. Some possibilities include:

- Better driver education and improved licensing standards.

- Enforcing speed limits and penalizing red-light violations.

- Lifetime bans for drunk driving.

- Making personal vehicles smaller and lighter.

- Mandating high-performance automatic emergency braking, also known as AEB.

- Mandating direct driver monitoring systems, or DMS, to detect driver drowsiness, distraction, and impairment.

AV developers clearly want U.S. lawmakers and regulatory agencies to prioritize safety on public roads by giving carte blanche to the rollout of AVs. But public safety isn’t solely about which companies have the most money to spend on lawyers and lobbyists to create their preferred outcome.

AV developers are businesses seeking profits, not autonomous altruists looking to make the world a safer place. In going too far, too fast, Cruise has reminded everyone of the risks of replacing humans as drivers, and the motives of the companies working to do so.

Bottom line:

Nowhere is it written down that AV developers have a right to exist, nor that public roads and members of the public exist to beta test experimental AV technology. Public roads are shared spaces, and public servants are in office to serve the public, not AV developers.

Colin Barnden is principal analyst at Semicast Research. He can be reached at colin.barnden@semicast.net.
